Top Tools Every Data Scientist Must Learn in 2026

Discover the top data science tools for 2026, including Python, Spark, and TensorFlow, with real-world examples to boost your career in data science.

Data science is no longer just about coding or analyzing numbers. In 2026, companies expect data scientists to handle everything from collecting raw data to building intelligent systems that drive business decisions.

If you are planning to build a career in data science or upgrade your skills, learning the right tools is often what separates average roles from high-paying ones. The demand for skilled data scientists is growing fast. According to a 2026 report by Statista, the global data science platform market is expected to cross $322 billion by 2026.

This blog will walk you through the most important tools every data scientist must learn in 2026, along with real-world examples and practical insights.

Why Tools Matter in Data Science

Data science is not just about understanding concepts; it is about applying them in real-world scenarios. In today’s data-driven economy, tools are the backbone of every data science workflow. From collecting raw data to deploying machine learning models, tools make the entire process efficient, scalable, and impactful.

Without the right tools, even the most skilled data scientist cannot deliver meaningful results.

The Role of Tools in Modern Data Science

Data science involves handling massive volumes of structured and unstructured data. Tools enable professionals to process, analyze, and extract insights from this data effectively.

Without tools:

- Large datasets cannot be processed efficiently

- Data visualization becomes difficult and unclear

- Building and training machine learning models is nearly impossible

- Deploying solutions in real-world environments is not feasible

Modern tools like Python, SQL, TensorFlow, and cloud platforms help automate tasks, improve accuracy, and speed up workflows. They transform theoretical knowledge into practical solutions that businesses can use.

The data science tools market itself is steadily expanding, expected to grow significantly as organizations rely more on analytics and AI-driven decision-making.

The global data science platform market is expected to grow from $204 billion in 2026 to over $631 billion by 2030, showing massive industry demand. (Source: Research and Markets)

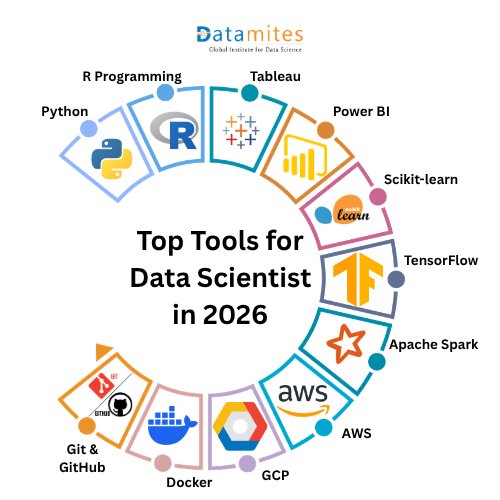

Top Tools Every Data Scientist Must Learn in 2026

Data science continues to evolve rapidly, and staying updated with the right tools is critical for career growth. In 2026, companies are not just looking for theoretical knowledge—they demand hands-on expertise in tools that can handle real-world data challenges, automation, and scalability.

Below are the most essential tools every data scientist must master in 2026, along with their importance and real-world applications.

1. Python - The Backbone of Data Science

Python remains the most dominant programming language in data science.

Why Python?

- Easy to learn and beginner-friendly

- Massive community support

- Versatile and scalable

Key Libraries:

- Pandas – Data analysis and manipulation

- NumPy – Numerical computations

- Matplotlib – Data visualization

- Scikit-learn – Machine learning

Market Insight:

Recent developer surveys indicate that over 70% of data scientists use Python daily, making it the most in-demand skill in job postings.

Real-Life Example:

Retail companies use Python to analyze customer purchase behavior and build recommendation systems that increase sales and customer retention.
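
As a rough illustration of the kind of purchase analysis described above, here is a minimal Pandas sketch. The order data and the column names (`customer_id`, `product`, `amount`) are made up for this example and are not taken from any real retailer. It summarizes spend per customer and counts the most frequently bought products, which is about the simplest possible starting point for a "popular items" recommendation.

```python
import pandas as pd

# Hypothetical order data; the schema is an assumption for illustration only.
orders = pd.DataFrame(
    {
        "customer_id": [1, 1, 2, 2, 2, 3],
        "product": ["shoes", "socks", "shoes", "hat", "socks", "shoes"],
        "amount": [60.0, 5.0, 55.0, 20.0, 6.0, 58.0],
    }
)

# Per-customer summary: number of orders and total spend.
customer_summary = (
    orders.groupby("customer_id")["amount"]
    .agg(order_count="count", total_spend="sum")
    .reset_index()
)

# Products ranked by how often they were purchased (most frequent first).
popular_products = orders["product"].value_counts()

print(customer_summary)
print(popular_products.head(2))
```

A real recommendation system would go well beyond frequency counts, but grouping and counting like this is usually where the exploration starts.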

2. R Programming - The Statistical Powerhouse

R is widely used in research, academia, and advanced statistical modeling.

Why Learn R?

- Excellent for statistical analysis

- Superior data visualization capabilities

- Preferred in research-driven industries

Market Insight:

R remains widely used in sectors like healthcare and finance, where statistical accuracy and data interpretation are critical.

Real-Life Example:

Healthcare researchers use R to analyze patient datasets and predict disease outbreaks or treatment outcomes.

3. Tableau - Business Intelligence Made Easy

Tableau is one of the leading tools for data visualization and business intelligence.

Key Features:

- Drag-and-drop interface

- Interactive dashboards

- Real-time analytics

Market Insight:

The BI tools market is growing rapidly, and Tableau is consistently ranked among the top 3 visualization tools used by enterprises.

Real-Life Example:

Sales teams use Tableau dashboards to track KPIs, monitor performance, and identify growth opportunities.

4. Power BI - Enterprise-Level Analytics Tool

Power BI is a Microsoft product widely used in corporate environments.

Why It Matters:

- Seamless integration with the Microsoft ecosystem

- Real-time data monitoring

- Cost-effective solution

Market Insight:

Power BI has seen a significant increase in adoption, especially among Fortune 500 companies due to its integration capabilities.

Real-Life Example:

Organizations use Power BI to monitor employee productivity, financial reports, and operational efficiency.

5. Scikit-learn - Machine Learning Simplified

Scikit-learn is a must-have library for building machine learning models.

What You Can Do:

- Classification and regression

- Clustering

- Model evaluation

Market Insight:

Machine learning adoption has surged, with over 60% of companies using ML models in production environments.

Real-Life Example:

Banks use Scikit-learn to detect fraudulent transactions by analyzing unusual patterns in financial data.
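
To make the classification and model evaluation steps more concrete, here is a small sketch of fraud detection framed as supervised classification. Everything about the data is an assumption: the transactions are synthetic, the two features (`amount` and `hour_of_day`) are invented for the example, and the fraud cases are deliberately easy to separate. It is not how any particular bank does it, only a sketch of the Scikit-learn workflow: split, fit, predict, evaluate.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import accuracy_score, recall_score
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(seed=0)

# Synthetic transactions with two assumed features: [amount, hour_of_day].
legit = np.column_stack([rng.normal(50, 15, 950), rng.normal(14, 3, 950)])
fraud = np.column_stack([rng.normal(400, 50, 50), rng.normal(3, 1, 50)])

X = np.vstack([legit, fraud])
y = np.concatenate([np.zeros(950, dtype=int), np.ones(50, dtype=int)])

# Stratify so the rare fraud class appears in both train and test sets.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0
)

model = RandomForestClassifier(n_estimators=50, random_state=0)
model.fit(X_train, y_train)
y_pred = model.predict(X_test)

# Accuracy alone is misleading on imbalanced data, so also check
# recall on the fraud class (share of fraud cases that were caught).
accuracy = accuracy_score(y_test, y_pred)
fraud_recall = recall_score(y_test, y_pred)
print(f"accuracy={accuracy:.3f}, fraud recall={fraud_recall:.3f}")
```

On real transaction data the classes overlap far more, so metrics such as recall and precision on the fraud class matter much more than overall accuracy.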

6. TensorFlow - Powering AI and Deep Learning

TensorFlow is widely used for deep learning and artificial intelligence applications.

Key Uses:

- Neural networks

- Image recognition

- Natural language processing

Market Insight:

AI adoption is accelerating, with over 75% of enterprises investing in AI technologies, increasing demand for TensorFlow expertise.

Real-Life Example:

Voice assistants and recommendation engines can be built on TensorFlow models that learn from user behavior to deliver more accurate responses.

7. Apache Spark - Big Data Processing at Scale

Apache Spark is essential for handling large-scale data processing.

Why Learn Spark?

- Fast processing speed

- Handles big data efficiently

- Supports real-time analytics

Market Insight:

With data volumes increasing exponentially, Spark has become a core tool in big data ecosystems.

Real-Life Example:

E-commerce companies use Spark to process millions of transactions and generate real-time insights for pricing and inventory.

8. AWS (Amazon Web Services) - Cloud Computing Leader

Cloud platforms are now a fundamental part of data science workflows.

Important Services:

- S3 – Storage

- EC2 – Compute

- SageMaker – Machine learning

Market Insight:

AWS holds the largest share of the global cloud market, at over 30%, making it a critical skill for data professionals.

Real-Life Example:

Startups use AWS to build scalable data pipelines without investing in physical infrastructure.

9. Google Cloud Platform (GCP) - Advanced Data Analytics

GCP is another powerful platform for data engineering and machine learning.

Key Tools:

- BigQuery – Data warehousing

- Vertex AI – Machine learning

Market Insight:

GCP adoption is growing rapidly due to its strong AI and analytics capabilities.

Real-Life Example:

Companies use BigQuery to analyze massive datasets in seconds, enabling faster decision-making.

10. Docker - Simplifying Deployment

Docker is essential for deploying data science applications consistently.

Why It Matters:

- Environment consistency

- Easy scaling and deployment

- Reduces compatibility issues

Market Insight:

With the rise of MLOps, containerization tools like Docker are becoming standard in production environments.

Real-Life Example:

Data scientists package machine learning models into Docker containers to ensure they run seamlessly across different systems.

11. Git & GitHub - Collaboration and Version Control

Version control tools are crucial for teamwork and project management.

Benefits:

- Track code changes

- Collaborate efficiently

- Manage large projects

Market Insight:

More than 90% of development teams use Git-based platforms for collaboration.

Real-Life Example:

Data science teams use GitHub to collaborate on projects, maintain version history, and deploy code efficiently.

Real-Life Use Case: End-to-End Data Science Project

In today’s data-driven economy, building a scalable end-to-end data science project is essential for businesses aiming to stay competitive. One of the most impactful real-world applications is an E-commerce Recommendation System, which leverages modern data science tools and cloud technologies to deliver personalized customer experiences and drive revenue growth.

This end-to-end data science workflow demonstrates how multiple tools and technologies integrate to build scalable, intelligent systems.

Scenario: E-commerce Recommendation System

An e-commerce company wants to recommend products based on user behavior, purchase history, and browsing patterns. Here’s how a complete data science pipeline works in real-world systems:

1. Data Collection → Cloud Storage (AWS S3)

The journey begins with collecting massive volumes of structured and unstructured data such as:

- User clicks and search queries

- Purchase history

- Product metadata

This data is stored in cloud platforms like Amazon S3, enabling scalable and secure storage for big data workloads.

Why it matters: Over 94% of enterprises now use cloud services, making cloud storage a backbone of modern data systems.

The recommendation engine market is expected to grow from $6.84 billion in 2025 to $139.58 billion by 2035, driven by personalized e-commerce experiences. (Source: Fundamental Business Insights)

2. Data Processing → Big Data Tools (Apache Spark)

Raw data is processed using distributed frameworks like Apache Spark, which handles:

- Data cleaning

- Transformation

- Real-time streaming

Industry insight: Around 90% of AI and machine learning projects depend on data engineering pipelines, highlighting the importance of tools like Spark.

3. Data Analysis → Python (Pandas)

Processed data is analyzed using Python libraries like Pandas, where data scientists:

- Perform exploratory data analysis (EDA)

- Identify trends and patterns

- Prepare features for modeling

Market fact: Python remains the most widely used language, with 34% of data scientists relying on it for analytics tasks.
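
The analysis and feature preparation step might look something like the sketch below. The event log, its column names (`user_id`, `product_id`, `event`), and the weights given to views and purchases are all assumptions chosen for illustration. The idea is to run a quick check of the data and then turn raw events into a user-by-product interaction matrix that a recommendation model can consume.

```python
import pandas as pd

# Hypothetical clickstream events; the schema is an assumption.
events = pd.DataFrame(
    {
        "user_id": ["u1", "u1", "u2", "u2", "u3", "u3", "u3"],
        "product_id": ["p1", "p2", "p1", "p3", "p2", "p3", "p3"],
        "event": ["view", "purchase", "purchase", "view",
                  "view", "purchase", "view"],
    }
)

# Quick EDA: event counts by type and missing values per column.
print(events["event"].value_counts())
print(events.isna().sum())

# Weight purchases more heavily than views (the weights are arbitrary).
events["weight"] = events["event"].map({"view": 1, "purchase": 3})

# User x product matrix of summed weights; 0 means no interaction.
interactions = events.pivot_table(
    index="user_id",
    columns="product_id",
    values="weight",
    aggfunc="sum",
    fill_value=0,
)
print(interactions)
```

In practice this matrix is large and sparse, so teams often move to sparse formats or do this step in Spark, but the shape of the transformation stays the same.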

4. Data Visualization → Tableau

Insights are visualized using tools like Tableau, enabling stakeholders to:

- Understand customer behavior

- Track KPIs and trends

- Make data-driven decisions

Companies using data visualization and analytics are 23x more effective in customer acquisition.

5. Model Building → Machine Learning (Scikit-learn / TensorFlow)

Machine learning models are built to power recommendation engines:

- Collaborative filtering

- Content-based filtering

- Deep learning models

Real-world impact: AI-driven recommendation systems contribute to 35% of e-commerce revenue, making them a critical business asset.
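
Of the approaches listed above, collaborative filtering is the easiest to sketch in a few lines. The example below is a minimal item-based version using NumPy and cosine similarity. The rating matrix and product names are made up, and the helper names `item_similarity` and `recommend` are hypothetical, not part of any library. Production systems typically rely on matrix factorization or deep learning models, so treat this as a sketch of the idea rather than a reference implementation.

```python
import numpy as np

# Rows are users, columns are products; values are made-up ratings
# where 0 means the user has not interacted with the product.
products = ["laptop", "mouse", "keyboard", "novel"]
ratings = np.array(
    [
        [5, 4, 0, 0],
        [4, 5, 5, 0],
        [0, 0, 1, 5],
        [5, 0, 4, 1],
    ],
    dtype=float,
)


def item_similarity(matrix):
    """Cosine similarity between item (column) vectors."""
    norms = np.linalg.norm(matrix, axis=0)
    norms[norms == 0] = 1.0  # avoid division by zero for unrated items
    normalized = matrix / norms
    return normalized.T @ normalized


def recommend(user_index, matrix, item_names, top_n=1):
    """Rank unseen items by similarity-weighted sum of the user's ratings."""
    similarity = item_similarity(matrix)
    user_ratings = matrix[user_index]
    scores = similarity @ user_ratings
    scores[user_ratings > 0] = -np.inf  # never re-recommend seen items
    ranked = [i for i in np.argsort(scores)[::-1] if np.isfinite(scores[i])]
    return [item_names[i] for i in ranked[:top_n]]


print(recommend(0, ratings, products))
```

The first user rated the laptop and mouse highly, so the keyboard (liked by users with similar tastes) scores above the novel. Content-based filtering would instead compare product attributes, and the two are often combined.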

6. Deployment → Docker + AWS

The final model is deployed using:

- Docker for containerization

- AWS for scalable cloud deployment

This ensures:

- High availability

- Real-time predictions

- Seamless integration with applications

Trend insight: Over 92% of enterprises adopt multi-cloud strategies, emphasizing scalable deployment practices.

The data science landscape in 2026 demands more than theoretical knowledge. Companies are actively seeking professionals who can work across the entire data lifecycle, from data collection and processing to machine learning and deployment. Mastering tools like Python, Apache Spark, TensorFlow, and cloud platforms such as Amazon Web Services is essential to stay competitive in the job market.

By building real-world projects like recommendation systems, you not only strengthen your technical skills but also gain practical experience that employers value. The right combination of tools, hands-on practice, and continuous learning will position you for high-growth opportunities in the evolving data science industry.

DataMites Institute offers industry-focused training designed to help learners build practical data science skills. With expert mentors and real-time projects, learners gain hands-on experience aligned with current industry demands.

If you are looking to start your journey, the Data Science Course in Ahmedabad by DataMites provides structured learning, career support, and globally recognized certification to help you succeed in the data science field.