Instructor Led Live Online

Self Learning + Live Mentoring

In-Person Classroom Training

MODULE 1: DATA ENGINEERING INTRODUCTION

• What is Data Engineering?

• Data Engineering scope

• Data Ecosystem, Tools and platforms

• Core concepts of Data engineering

MODULE 2: DATA SOURCES AND DATA IMPORT

• Types of data sources

• Databases: SQL and Document DBs

• Managing Big data

MODULE 3: DATA INTEGRITY AND PRIVACY

• Data integrity basics

• Various aspects of data privacy

• Various data privacy frameworks and standards

• Industry related norms in data integrity and privacy: data engineering perspective

MODULE 4: DATA ENGINEERING ROLE

• Who is a data engineer?

• Various roles of data engineer

• Skills required for data engineering

• Data Engineer Collaboration with Data Scientist and other roles.

MODULE 1: PYTHON BASICS

• Introduction to Python

• Installation of Python and IDE

• Python objects

• Python basic data types

• String functions part 1

• String functions part 2

• Python Operators

MODULE 2: PYTHON CONTROL STATEMENTS

• IF Conditional statement, IF-ELSE

• NESTED IF

• Python Loops Basics, WHILE Statement

• BREAK and CONTINUE statements

• FOR statements

MODULE 3: PYTHON PACKAGES

• Introduction to Packages in Python

• Datetime Package and Methods

MODULE 4: PYTHON DATA STRUCTURES

• Basic Data Structures in Python

• Basics of List

• List methods

• Tuple: Object and methods

• Sets: Object and methods

• Dictionary: Object and methods

MODULE 5: PYTHON FUNCTIONS

• Functions basics

• Function Parameter passing

• Lambda functions

• Map, reduce, filter functions

MODULE 1 : OVERVIEW OF STATISTICS

• Introduction to Statistics: Descriptive And Inferential Statistics

• a. Descriptive Statistics

• b. Inferential Statistics

• Basic Terms Of Statistics

• Types Of Data

MODULE 2 : HARNESSING DATA

• Random Sampling

• Sampling With Replacement And Without Replacement

• Cochran's Minimum Sample Size

• Types of Sampling

• Simple Random Sampling

• Stratified Random Sampling

• Cluster Random Sampling

• Systematic Random Sampling

• Multistage Sampling

• Sampling Error

• Methods Of Collecting Data

MODULE 3 : EXPLORATORY DATA ANALYSIS

• Exploratory Data Analysis Introduction

• Measures Of Central Tendencies, Measure of Spread

• Data Distribution Plot: Histogram

• Normal Distribution

• Z Value / Standard Value

• Empirical Rule and Outliers

• Central Limit Theorem

• Normality Testing

• Skewness & Kurtosis

• Measures Of Distance: Euclidean, Manhattan And Minkowski Distance

• Covariance and Correlation

MODULE 4 : HYPOTHESIS TESTING

• Hypothesis Testing Introduction

• Types of Hypothesis

• P-Value, Critical Region

• Types of Hypothesis Testing: Parametric, Non-Parametric

• Hypothesis Testing Errors : Type I And Type II

• Two Sample Independent T-test

• Two Sample Related (Paired) T-test

• One Way Anova Test

• Application of Hypothesis Testing (Proposed)

MODULE 1: DATA WAREHOUSE FOUNDATION

• Data Warehouse Introduction

• Database vs Data Warehouse

• Data Warehouse Architecture

• Data Lakehouse

• ETL (Extract, Transform, and Load)

• ETL vs ELT

• Star Schema and Snowflake Schema

• Data Mart Concepts

• Data Warehouse vs Data Mart: Know the Difference

• Data Lake Introduction and Architecture

• Data Warehouse vs Data Lake

MODULE 2: DATA PROCESSING

• Python NumPy Package Introduction

• Array data structure, Operations

• Python Pandas package introduction

• Data structures: Series and DataFrame

• Importing data into Pandas DataFrame

• Data processing with Pandas

MODULE 3: DOCKER AND KUBERNETES FOUNDATION

• Docker Introduction

• Docker vs. VM

• Hands-on: Running our first container

• Common commands (running, editing, stopping, copying and managing images), YAML basics

• Publishing containers to DockerHub

• Kubernetes Orchestration of Containers

• Docker Swarm vs Kubernetes

MODULE 4: DATA ORCHESTRATION WITH APACHE AIRFLOW

• Data Orchestration Overview

• Apache Airflow Introduction

• Airflow Architecture

• Setting up Airflow

• TAG and DAG

• Creating Airflow Workflow

• Airflow Modular Structure

• Executing Airflow

MODULE 5: DATA ENGINEERING PROJECT

• Setting Project Environment

• Data pipeline setup

• Hands-on: build scalable data pipelines

MODULE 1 : AWS DATA SERVICES INTRODUCTION

• AWS Overview and Account Setup

• AWS IAM Users, Roles and Policies

• AWS S3 overview

• AWS EC2 overview

• AWS Lambda overview

• AWS Glue overview

• AWS Kinesis overview

• AWS DynamoDB overview

• AWS Athena overview

• AWS Redshift overview

MODULE 2 : DATA PIPELINE WITH GLUE

• AWS Glue Crawler and setup

• ETL with AWS Glue

• Data Ingesting with AWS Glue

MODULE 3 : DATA PIPELINE WITH AWS KINESIS

• AWS Kinesis overview and setup

• Data Streams with AWS Kinesis

• Data Ingesting from AWS S3 using AWS Kinesis

MODULE 4 : DATA WAREHOUSE WITH AWS REDSHIFT

• AWS Redshift Overview

• Analyze data using AWS Redshift from warehouses, data lakes and operations DBs

• Develop Applications using AWS Redshift cluster

• AWS Redshift federated Queries and Spectrum

MODULE 5 : DATA PIPELINE WITH AZURE SYNAPSE

• Azure Synapse setup

• Understanding Data control flow with ADF

• Data Pipelines with Azure Synapse

• Prepare and transform data with Azure Synapse Analytics

MODULE 6 : STORAGE IN AZURE

• Create Azure storage account

• Connect App to Azure Storage

• Azure Blob Storage

MODULE 7: AZURE DATA FACTORY

• Azure Data Factory Introduction

• Data transformation with Data Factory

• Data Wrangling with Data Factory

MODULE 8 : AZURE DATABRICKS

• Azure Databricks introduction

• Azure Databricks architecture

• Data Transformation with Databricks

MODULE 9 : AZURE RDS

• Creating a Relational Database

• Querying in and out of Relational Database

• ETL from RDS to Databricks

MODULE 10 : AZURE RDS

• Hands-on Project Case-study

• Setup Project Development Env

• Organization of Data Sources

• AZURE/AWS services for Data Ingestion

• Data Extraction Transformation

MODULE 1: GIT INTRODUCTION

• Purpose of Version Control

• Popular Version control tools

• Git Distributed Version Control

• Terminologies

• Git Workflow

• Git Architecture

MODULE 2: GIT REPOSITORY and GitHub

• Git Repo Introduction

• Create New Repo with Init command

• Copying existing repo

• Git user and remote node

• Git Status and rebase

• Review Repo History

• GitHub Cloud Remote Repo

MODULE 3: COMMITS, PULL, FETCH AND PUSH

• Code commits

• Pull, Fetch and conflicts resolution

• Pushing to Remote Repo

MODULE 4: TAGGING, BRANCHING AND MERGING

• Organize code with branches

• Checkout branch

• Merge branches

MODULE 5: UNDOING CHANGES

• Editing Commits

• Commit command Amend flag

• Git reset and revert

MODULE 6: GIT WITH GITHUB AND BITBUCKET

• Creating GitHub Account

• Local and Remote Repo

• Collaborating with other developers

MODULE 1 : DATABASE INTRODUCTION

MODULE 2 : SQL BASICS

MODULE 3 : DATA TYPES AND CONSTRAINTS

MODULE 4 : DATABASES AND TABLES (MySQL)

MODULE 5 : SQL JOINS

MODULE 6 : SQL COMMANDS AND CLAUSES

MODULE 7 : DOCUMENT DB/NO-SQL DB

MODULE 1: BIG DATA INTRODUCTION

• Big Data Overview

• Five Vs of Big Data

• What is Big Data and Hadoop

• Introduction to Hadoop

• Components of Hadoop Ecosystem

• Big Data Analytics Introduction

MODULE 2: HDFS AND MAP REDUCE

• HDFS – Big Data Storage

• Distributed Processing with Map Reduce

• Key Terms: Output Format

• Partitioners Combiners Shuffle and Sort

• Hands-on Map Reduce task

MODULE 3: PYSPARK FOUNDATION

• PySpark Introduction

• Resilient Distributed Datasets (RDDs), Working with RDDs in PySpark, SparkContext, Aggregating Data with Pair RDDs

• Spark Databricks

• Spark Streaming

MODULE 1: SPARK SQL and HADOOP HIVE

• Introducing Spark SQL

• Spark SQL vs Hadoop Hive

• Working with Spark SQL Query Language

MODULE 2: KAFKA and Spark

• Kafka architecture

• Kafka workflow

• Configuring Kafka cluster

• Operations

MODULE 3: KAFKA and Spark

• Creating an HDFS cluster with containers

• Creating a PySpark cluster with containers

• Processing data on an HDFS cluster with a PySpark cluster

MODULE 1: TABLEAU FUNDAMENTALS

• Introduction to Business Intelligence & Introduction to Tableau

• Interface Tour, Data visualization: Pie chart, Column chart, Bar chart.

• Bar chart, Tree Map, Line Chart

• Area chart, Combination Charts, Map

• Dashboards creation, Quick Filters

• Create Table Calculations

• Create Calculated Fields

• Create Custom Hierarchies

MODULE 2: POWER-BI Basics

• Power BI Introduction

• Basic Visualizations

• Dashboard Creation

• Basic Data Cleaning

• Basic DAX Functions

MODULE 3: DATA TRANSFORMATION TECHNIQUES

• Exploring Query Editor

• Data Cleansing and Manipulation:

• Creating Our Initial Project File

• Connecting to Our Data Source

• Editing Rows

• Changing Data Types

• Replacing Values

MODULE 4: CONNECTING TO VARIOUS SOURCES

• Connecting to a CSV File

• Connecting to a Webpage

• Extracting Characters

• Splitting and Merging Columns

• Creating Conditional Columns

• Creating Columns from Examples

• Create Data Model

Data engineering can be explained as the practice that involves the design, development, and management of systems and processes to handle and analyze large amounts of data effectively. It emphasizes the creation of reliable data pipelines, ensuring the quality and integrity of data, and enabling data-driven decision-making. Data engineering plays a crucial role in the collection, organization, and utilization of data for various applications and business insights.
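As a rough illustration of what a "data pipeline" with a basic quality check can look like, here is a minimal extract-transform-load sketch in Python. Everything in it is an assumption made for illustration: the sample CSV, the column names `order_id` and `amount`, the function names, and the in-memory list standing in for a warehouse table are all hypothetical and are not taken from the course material.

```python
import csv
import io

# Hypothetical raw input; in practice this would come from a file, API, or database.
RAW_CSV = """order_id,amount
1,19.99
2,
3,5.00
"""


def extract(text):
    """Extract: read CSV text into a list of row dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Transform: drop rows with a missing amount and convert field types."""
    clean = []
    for row in rows:
        if not row["amount"]:
            continue  # simple data-quality rule: skip incomplete records
        clean.append({"order_id": int(row["order_id"]), "amount": float(row["amount"])})
    return clean


def load(rows, target):
    """Load: append cleaned rows to a list standing in for a warehouse table."""
    target.extend(rows)
    return len(rows)


warehouse = []  # hypothetical stand-in for a real data store
loaded = load(transform(extract(RAW_CSV)), warehouse)
print(loaded, "rows loaded")
```

Real pipelines usually add scheduling, logging, and error handling around these three stages, but the extract, transform, load shape is typically the same.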

Individuals aspiring to pursue a data engineering career in Imphal can take the following steps:

• Lay a solid foundation in mathematics, statistics, and programming.

• Gain proficiency in programming languages like Python or SQL.

• Develop expertise in database management systems and data manipulation techniques.

• Familiarize themselves with big data technologies such as Hadoop and Spark.

• Enhance their skills through hands-on projects and practical experience.

Certainly, data engineering holds promising prospects for the future. The growing importance of data-driven decision-making and the exponential growth of data volumes have created a strong demand for skilled data engineers. The role of data engineering in handling and processing data efficiently to extract valuable insights and drive business outcomes positions it as a field with abundant opportunities and a bright future ahead.

Undergoing training in data engineering offers several advantages for individuals, including:

• Developing valuable skills and knowledge in the field of data engineering.

• Expanding career opportunities in diverse industries.

• Gaining practical experience through project-based learning.

• Keeping abreast of industry trends and technological advancements.

Joining a data engineer course in Imphal typically requires meeting certain criteria that may vary depending on the course and institution. Generally, it is beneficial to have a basic understanding of mathematics, statistics, and programming. Familiarity with databases, SQL, and programming languages such as Python or Java can also be advantageous for a more seamless learning journey.

Prerequisites for enrolling in a data engineer course in Imphal may vary depending on the specific program and institution. However, a basic understanding of mathematics, statistics, and programming concepts is generally beneficial. Knowledge of databases, SQL, and programming languages like Python or Java can also be advantageous.

Individuals considering data engineer training in Imphal should anticipate costs that may vary based on factors like the training institution, program duration, and training mode (online or classroom). Generally, the fees for data engineer training in Imphal can be expected to range from approximately 40,000 INR to 1,00,000 INR.

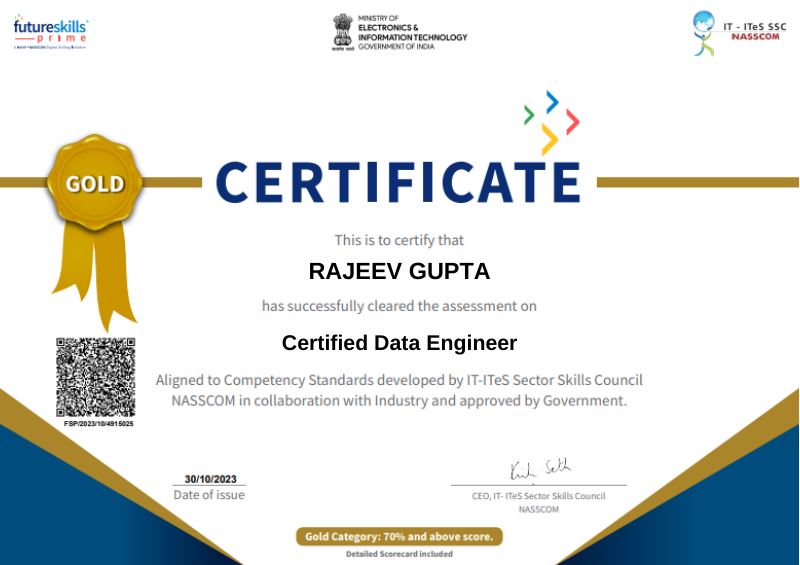

For data engineer training, DataMites comes highly recommended as an institute of choice. They provide a comprehensive curriculum, industry-relevant projects, and experienced instructors who equip students with the essential skills and knowledge required in data engineering.

After undergoing data engineer training, individuals can embark on various career paths, including opportunities as Data Engineers, Database Administrators, ETL Developers, Big Data Engineers, and Cloud Data Engineers. These career options span industries such as technology, finance, healthcare, and e-commerce.

Data engineer job positions are not exclusively limited to individuals with prior experience. Entry-level roles or positions targeted at individuals with limited experience are available in the data engineering field. By participating in internships or gaining practical experience through projects, individuals without experience can showcase their skills and competence to secure data engineer job positions.

Data engineer training adds substantial value by equipping individuals with the skills and knowledge required for success in the field. It encompasses crucial concepts, tools, and techniques involved in developing robust data pipelines, managing databases, and ensuring efficient data processing and analysis. This proficiency is highly coveted in the modern business landscape, where data plays a central role in driving growth, innovation, and competitiveness.

If you're looking to obtain data engineering training in Imphal, one option is to enroll at DataMites. They offer comprehensive courses that cover essential data engineering concepts, tools, and techniques. With a focus on practical learning, hands-on experience, and expert guidance, DataMites provides a strong foundation in data engineering.

The DataMites Certified Data Engineer Training program in Imphal provides instruction in a variety of subjects. These include data engineering concepts, tools, and technologies like Hadoop, Spark, SQL, and data pipeline development. The program also incorporates practical exercises and hands-on projects to ensure a comprehensive understanding and practical application of these topics.

The requirements for enrolling in the Data Engineer Course at DataMites® in Imphal can vary. Typically, individuals with a background in computer science, mathematics, or related fields, as well as professionals aspiring to work in data engineering roles, are eligible to enroll.

Yes, DataMites® conducts classroom training for Data Engineer Courses in Imphal, in addition to their online training offerings. They ensure flexibility by providing individuals the option to select the training mode that suits their needs and preferences.

The instructors for the Data Engineer Course in Imphal at DataMites® have a strong background in data engineering. They possess expertise in data engineering concepts, tools, and industry practices, allowing them to provide valuable instruction and guidance to participants throughout the training program.

DataMites® offers a variety of learning formats for data engineering courses, including instructor-led online training, classroom training, and self-paced learning. These options allow individuals to select the learning format that best meets their preferences and time constraints.

Yes, at DataMites®, individuals can attend demo classes before paying the course fee. This gives them a preview of the teaching style and course content and a chance to interact with instructors, so they can make an informed choice.

Yes, at DataMites®, individuals have the option to pay the course fee in installments. This flexible payment arrangement considers the financial constraints that learners may face, allowing them to manage the course fee while pursuing their data engineering training.

Certainly, DataMites® offers placement support for individuals enrolled in Data Engineer Training in Imphal. They extend valuable assistance in terms of career guidance, resume preparation, interview readiness, and facilitating job placements to enhance participants' career prospects.

DataMites® offers flexible payment options for their training programs, providing learners with convenience and ensuring secure transactions. Accepted payment methods include credit cards, debit cards, net banking, and other digital payment options. Through their online channels, learners can choose the payment method that aligns with their preferences, facilitating a smooth and seamless transaction process while maintaining security.

The expected length of the DataMites Data Engineer Course in Imphal depends on the chosen learning mode. On average, online instructor-led training spans approximately 6 months, with over 150 learning hours. However, the duration may differ for self-paced learning options.

The DataMites Placement Assistance Team (PAT) helps aspirants take all the necessary steps to start their career in Data Science. Some of the services provided by PAT are:

The DataMites Placement Assistance Team (PAT) conducts career mentoring sessions to help aspirants understand the role they will play when they step into the corporate world. Industry experts guide students through the various possibilities in a Data Science career, which helps aspirants draw a clear picture of the career options available. They also learn about the obstacles they are likely to face as freshers in the field and how to tackle them.

No, PAT does not promise a job, but it helps aspirants build the skills needed to land one. Aspirants can capitalize on those skills in the long run to build a successful career in Data Science.